Desired outcome

Maximize the observability value of your data by optimizing data ingest. Reduce non-essential ingest data so you can stay within your budget.

Process

Prioritize your observability objectives

Develop an optimization plan

Use data reduction techniques to execute your plan

Prioritize your observability objectives

One of the most important parts of the data governance framework is aligning collected telemetry with observability value drivers. When you configure new telemetry, make sure you understand what its primary observability objective is.

When you introduce new telemetry, you want to understand what it delivers to your overall observability solution. Your new data might overlap with other data. If you can't align new telemetry to any of the key objectives, reconsider whether to introduce that data.

Objectives include:

- Meeting an internal SLA

- Meeting an external SLA

- Supporting feature innovation (A/B performance & adoption testing)

- Monitoring customer experience

- Holding vendors and internal service providers to their SLAs

- Business process health monitoring

- Other compliance requirements

Alignment to these objectives is what allows you to make flexible and intuitive decisions about prioritizing one set of data over another, and it helps teams know where to start when instrumenting new platforms and services.

Develop an optimization plan

In this section you'll make two core assumptions:

- You have the tools and techniques from the Baseline your data ingest section, so you have a proper accounting of where your ingest comes from.

- You have a good understanding of the observability maturity value drivers. This will be crucial in applying a value and a priority to groups of telemetry.

Use the following examples to help you visualize how you would assess your own telemetry ingest and make the sometimes hard decisions that are needed to get within budget. Although each of these examples tries to focus on a value driver, most instrumentation serves more than one value driver. This is the hardest part of data ingest governance.

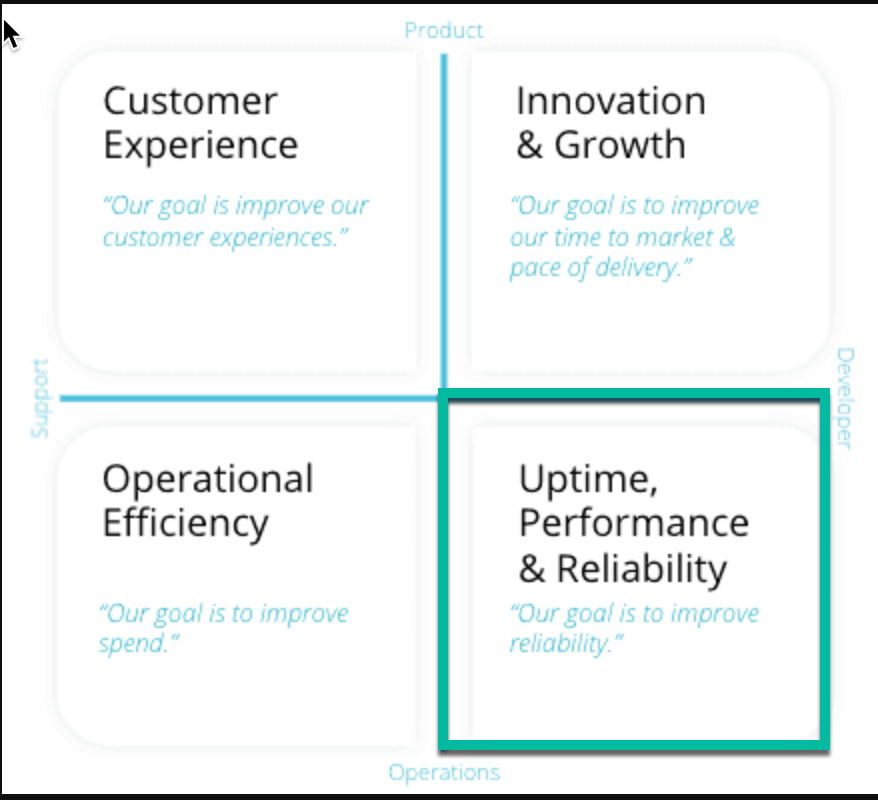

An account is ingesting about 20% more than the team had budgeted for. They have been asked by a manager to find some way to reduce consumption. Their most important value driver is Uptime, performance, and reliability.

Observability value drivers with a focus on Uptime and Reliability

Their estate includes:

APM (dev, staging, prod)

Distributed tracing

Browser

Infrastructure monitoring 100 hosts

K8s monitoring (dev, staging, prod)

Logs (dev, staging, prod - including debug)

Optimization plan

- Omit debug logs (knowing they can be turned on if there is an issue) (saves 5%)

- Omit several K8s state metrics which are not required to display the Kubernetes cluster explorer (saves 10%)

- Drop some custom Browser events they were collecting back when they were doing a lot of A/B testing of new features (saves 10%)

After executing those changes the team is 5% below their budget and has freed up some space to do an NPM pilot. Their manager is satisfied they are not losing any significant Uptime and reliability observability.

Final outcome

- 5% under their original budget

- Headroom created for an NPM pilot which serves Uptime, performance, and reliability objectives

- Minimal loss of Uptime and Reliability observability
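The arithmetic behind this plan can be checked with a short sketch. It assumes every percentage is expressed relative to the original budget, which the scenario implies but does not state. The function name is illustrative.

```python
def projected_overage(current_overage_pct, savings_pct):
    """Return the expected overage (a negative value means under budget).

    Assumption: every figure is a percentage of the original budget,
    so the savings can simply be summed and subtracted.
    """
    return current_overage_pct - sum(savings_pct)


# Scenario above: 20% over budget, with three planned reductions.
savings = {
    "debug logs": 5,
    "unused K8s state metrics": 10,
    "obsolete custom Browser events": 10,
}
result = projected_overage(20, savings.values())
print(f"Projected position: {result:+d}% relative to budget")
```

Listing each reduction with its estimated saving also makes it easy to see which items you could keep if the target is reached early.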

A team responsible for a new user-facing platform with an emphasis on mobile monitoring and browser monitoring is running 50% over budget. They will need to right-size their ingest, but they are adamant about not sacrificing any Customer Experience observability.

Observability value drivers with a focus on Customer Experience

Their estate includes:

Mobile

Browser

APM

Distributed tracing

Infrastructure on 30 hosts, including process samples

Serverless monitoring for some backend asynchronous processes

Logs from their serverless functions

Various cloud integrations

Optimization plan

- Omit the serverless logs (they are basically redundant to what they get from their Lambda integration)

- Decrease the process sample rate on their hosts to every one minute

- Drop process sample data in DEV environments

- Turn off EC2 integration which is highly redundant with other infrastructure monitoring provided by the New Relic infra agent.

Final outcome

- 5% over their original budget

- Sufficient to get them through peak season

- No loss of customer experience observability

After executing the changes they are now just 5% over their original budget, but they conclude this will be sufficient to carry them through peak season.

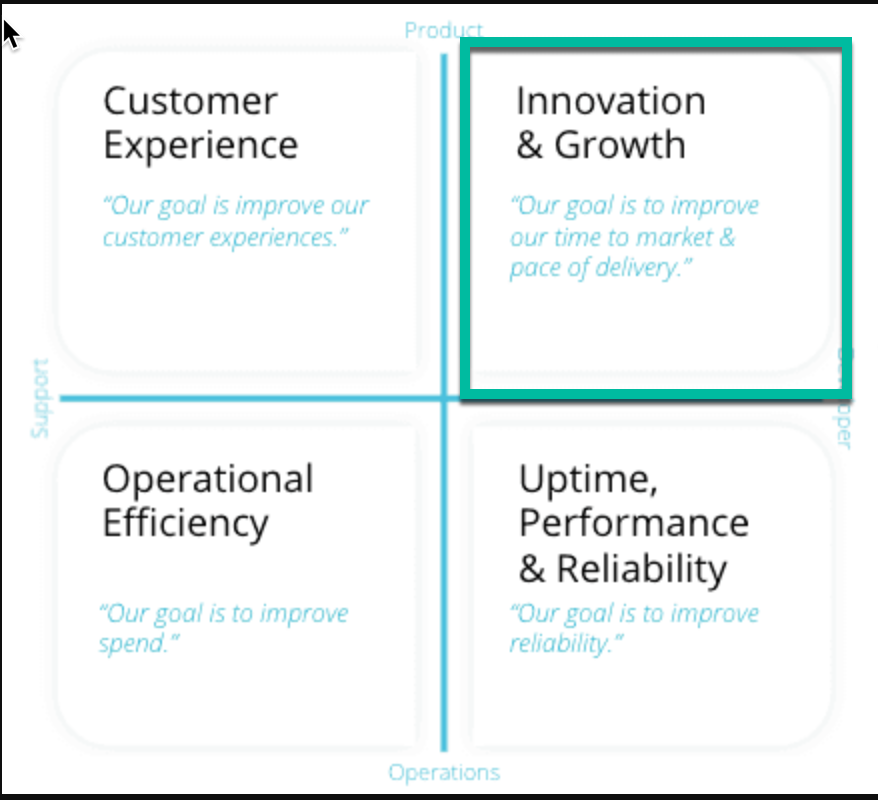

A team is in the process of refactoring a large Python monolith into four microservices. The monolith shares much infrastructure with the new architecture, including a customer database and a cache layer. They are 70% over budget and have two months to go before they can officially decommission the monolith.

Observability value drivers with a focus on Innovation and Growth

Their estate includes:

K8s monitoring (microservices)

New Relic host monitoring (monolith)

APM (microservices and host monitoring)

Distributed tracing (microservices and host monitoring)

PostgreSQL (shared)

Redis (shared)

MSSQL (future DB for the microservice architecture)

Load balancer logging (microservices and host monitoring)

Optimization plan

- Configure load balancer logging to only monitor 5xx response codes (monolith)

- Set a custom sample rate of 60s on ProcessSample, StorageSample, and NetworkSample for hosts running the monolith

- Disable MSSQL monitoring since the new architecture does not currently use it.

- Disable distributed tracing for the monolith as it's far less useful than it will be for the microservice architecture.

Final outcome

- 1% under their original budget

- No loss of Innovation observability

Use data reduction techniques to execute your plan

At this stage you've given thought to all of the kinds of telemetry in your account(s) and how they relate to your value drivers. This section provides detailed technical instructions and examples on how to reduce a variety of telemetry types. The approaches are grouped by telemetry source, and each group starts with its main growth drivers.

APM agents

Growth drivers

- Monitored transactions

- Error activity

- Custom events

The volume of data generated by the APM agent will be determined by several factors:

- The amount of organic traffic generated by the application (that is, all things being equal, an application called one million times per day will generate more data than one called one thousand times per day)

- Some of the characteristics of the underlying transaction data itself (length and complexity of URLs)

- Whether the application is reporting database queries

- Whether the application has transactions with many (or any) custom attributes

- The error volume for the application

- Whether the application agent is configured for distributed tracing

Managing volume

While you can assume that all calls to an application are needed to support the business, it is possible that you could be more thrifty in your overall architecture. In an extreme case, you may have a user profile microservice that is called every 10 seconds by its clients. This helps reduce latency if some user information is updated by other clients. However, one lever you have is reducing the frequency of calls to this service to, for example, once every minute.

Custom attributes

Any custom attribute added using a call to the APM API addCustomParameter adds an additional attribute to the transaction payload. These are often useful, but as application logic and priorities change, the data can become less valuable or even obsolete.

The Java agent captures the following request.headers by default:

- request.headers.referer

- request.headers.accept

- request.headers.contentLength

- request.headers.host

- request.headers.userAgent

Developers might also use `addCustomParameter` to capture additional (potentially more verbose) headers.

For an example of the rich configuration that is available in relation to APM, see our Java agent documentation.

Error events

It is possible to determine how errors will be handled by APM. This can reduce the volume of data in some cases. For example, there may be a high-volume but harmless error that cannot be removed at the present time.

We have the ability to `collect`, `ignore`, or `mark as expected`. The agent's error configuration documentation covers all of the details.

Database queries

One highly variable aspect of APM instances is the number of database calls and what configurations we have set. We have a fair amount of control on how verbose database query monitoring is. These queries will show up in the transaction traces page.

Common database query setting changes include:

- Collecting raw query data instead of obfuscated, or turning off query collection

- Changing the stack trace threshold

- Turning on query explain plan collection

More details can be found here.

Setting Event Limits

Our APM and mobile agents have limits on how many events can be reported per harvest cycle. If there were no limit, a very large number of events being sent could impact the performance of your application or New Relic. When the limit is reached, the agents begin sampling events in order to give a representative sample of events across the harvest cycle. Different agents have different limits.
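To make the idea of a capped but representative sample concrete, here is a minimal sketch using reservoir sampling. This is a generic technique chosen for illustration, not a description of how any New Relic agent samples internally. The function name, the 5,000-event harvest, and the cap of 2,000 are assumptions.

```python
import random


def sample_harvest(events, limit, rng):
    """Reservoir sampling: keep at most `limit` events, each equally likely."""
    reservoir = []
    for seen, event in enumerate(events, start=1):
        if len(reservoir) < limit:
            reservoir.append(event)          # fill the reservoir first
        else:
            slot = rng.randrange(seen)       # uniform in [0, seen)
            if slot < limit:
                reservoir[slot] = event      # replace with probability limit/seen
    return reservoir


# Hypothetical harvest cycle: 5,000 events against a cap of 2,000.
events = [{"id": i, "duration_ms": 100} for i in range(5000)]
kept = sample_harvest(events, limit=2000, rng=random.Random(0))

# Scaling by seen/kept estimates totals for the full population.
scale = len(events) / len(kept)
estimated_total_ms = sum(e["duration_ms"] for e in kept) * scale
print(len(kept), scale, estimated_total_ms)
```

Because every event has the same chance of being kept, aggregates computed from the sample can be scaled back up, which is the same idea as compensating for sampled events at query time.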

Events that are capped and subject to sampling include:

- Custom events reported via agent API (for example, the .NET agent's `RecordCustomEvent`)

- Mobile

- MobileCrash

- MobileHandledException

- MobileRequest

- Span (see Distributed tracing sampling)

- Transaction

- TransactionError

Most agents have configuration options for changing the event limit on sampled transactions. For example, the Java agent uses max_samples_stored. The default value for max_samples_stored is `2000` and the max is `10000`. This value governs how many sampled events can be reported every 60 seconds from an agent instance. See the documentation on event sampling limits for a full explanation.

You can compensate for sampled events via NRQL's `EXTRAPOLATE` operator.

Before attempting to change how sampling occurs, please read these caveats and recommendations:

- The more events you report, the more memory your agent will use.

- You can usually get the data you need without raising an agent's event-reporting limit.

- The payload size limit is 1MB (10^6 bytes) (compressed), so the number of events may still be affected by that limit. To determine if events are being dropped, see the agent log for a `413 HTTP` status message.

Transaction traces

Growth drivers

- Number of connected services

- Number of monitored method calls per connected service

In APM, transaction traces record in-depth details about your application's transactions and database calls. You can edit the default settings for transaction traces. This is also highly configurable using this guide. The level and mode of configurability will be language-specific in many cases.

Transaction trace settings available using server-side configuration will differ depending on the New Relic agent you use. The UI includes descriptions of each. Settings in the UI may include:

- Transaction tracing and threshold

- Record SQL, including recording level and input fields

- Log SQL and stack trace threshold

- SQL query plans and threshold

- Error collection, including HTTP code and error class

- Slow query tracing

- Thread profiler

- Cross application tracing

Distributed tracing

Distributed tracing configuration will have some language-specific differences.

Distributed tracing can be disabled as needed. This is an example for the Java agent's newrelic.yml:

    distributed_tracing:
      enabled: false

This is a Node.js example for newrelic.js:

    distributed_tracing: {
      enabled: false
    }

Data volume will also vary based on whether you are using Infinite Tracing.

Standard distributed tracing for APM agents (above) captures up to 10% of your traces, but if you want us to analyze all your data and find the most relevant traces, you can set up Infinite Tracing. This alternative to standard distributed tracing is available for all APM language agents except C SDK.

The main parameters that could drive a small increase in monthly ingest are:

- Configure trace observer monitoring

- Configure span attribute trace filter

- Configure random trace filter

Browser agent

Growth drivers

- Page loads

- Ajax calls

- Error activity

In agent version 1211 or higher, all network requests made by a page are recorded as AjaxRequest events. You can use the deny list configuration options in the Application settings page to filter which requests record events. Regardless of this filter, all network requests are captured as metrics and available in the AJAX page.

Using the deny list

Requests can be blocked in three ways:

- To block recording of all `AjaxRequest` events, add an asterisk * as a wildcard.

- To block recording of `AjaxRequest` events to a domain, enter just the domain name. Example: `example.com`

- To block recording of `AjaxRequest` events to a specific domain and path, enter the domain and path. Example: `example.com/path`

The protocol, port, search and hash of a URL are ignored by the deny list.

To validate whether the filters you have added work as expected, run a NRQL query for AjaxRequest events matching your filter.
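Before saving a deny list, it can help to reason about which URLs each entry would cover. The sketch below models the three rules described above. It is an illustration only, not the browser agent's implementation, and it assumes that hosts must match exactly and that a path entry also covers anything beneath that path. The function name is hypothetical.

```python
from urllib.parse import urlsplit


def is_denied(request_url, deny_list):
    """Return True if any deny-list entry matches the request URL.

    Assumptions (not confirmed by the text): hosts must match exactly,
    and a path entry matches that path and anything beneath it.
    """
    parts = urlsplit(request_url)   # protocol, port, query, and hash are not compared
    host = parts.hostname or ""
    path = parts.path.strip("/")
    for entry in deny_list:
        if entry == "*":            # wildcard blocks every AjaxRequest event
            return True
        domain, _, entry_path = entry.partition("/")
        if host != domain.lower():
            continue
        entry_path = entry_path.strip("/")
        if not entry_path:          # domain-only entry covers the whole domain
            return True
        if path == entry_path or path.startswith(entry_path + "/"):
            return True
    return False


print(is_denied("https://example.com:8443/path?x=1#top", ["example.com/path"]))
print(is_denied("https://example.com/other", ["example.com/path"]))
```

The first call matches despite the port, query string, and fragment, while the second does not because the path differs.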

Accessing the deny list

To update the deny list of URLs your application will filter from creating events, go to the app settings page:

1. Go to one.newrelic.com, and click Browser.
2. Select an app.
3. On the left navigation, click App settings.
4. Under Ajax request deny list, add the filters you would like to apply to your app.
5. Select Save application settings to update the agent configuration.
6. Redeploy the browser agent (either restarting the associated APM agent or updating the copy/paste browser installation).

Validating

    FROM AjaxRequest SELECT * WHERE requestUrl LIKE '%example.com%'

Mobile agents

Growth drivers

- Monthly active users

- Crash events

- Number of events per user

Android

All settings, including the call to invoke the agent, are called in the onCreate method of the MainActivity class. To change settings, call the setting in one of two ways (if the setting supports it):

    NewRelic.disableFeature(FeatureFlag.DefaultInteractions);
    NewRelic.enableFeature(FeatureFlag.CrashReporting);
    NewRelic.withApplicationToken(<NEW_RELIC_TOKEN>).start(this.getApplication());

Analytics settings: Enable or disable collection of event data. These events are reported to New Relic and used in the Crash analysis page.

It's also possible to configure agent logging to be more or less verbose.

iOS

As with Android, New Relic's iOS configuration allows you to enable and disable feature flags.

The following feature flags can be configured:

Crash and error reporting

- NRFeatureFlag_CrashReporting

- NRFeatureFlag_HandleExceptionEvents


Distributed tracing

- NRFeatureFlag_DistributedTracing

Interactions

- NRFeatureFlag_DefaultInteractions

- NRFeatureFlag_InteractionTracing

- NRFeatureFlag_SwiftInteractionTracing

Network feature flags

- NRFeatureFlag_ExperimentalNetworkInstrumentation

- NRFeatureFlag_NSURLSessionInstrumentation

- NRFeatureFlag_NetworkRequestEvents

- NRFeatureFlag_RequestErrorEvents

- NRFeatureFlag_HttpResponseBodyCapture

See this document for more details.

Infrastructure agent

Growth drivers

- Hosts and containers monitored

- Sampling rates for core events

- Process sample configurations

- Custom attributes

- Number and type of on-host integrations installed

- Log forwarding configuration

New Relic's Infrastructure agent configuration file contains a couple of powerful ways to control ingest volume. The most important is sampling rates, for which there are several distinct configurations. The other is custom process sample filters.

Sampling rates

There are a number of sampling rates that can be configured in infrastructure, but these are the most commonly used.

| Parameter | Default (seconds) | Disable |
|---|---|---|
| metrics_storage_sample_rate | 5 | -1 |
| metrics_process_sample_rate | 20 | -1 |
| metrics_network_sample_rate | 10 | -1 |
| metrics_system_sample_rate | 5 | -1 |
| metrics_nfs_sample_rate | 5 | -1 |
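A quick calculation shows how strongly a sample rate affects volume. This sketch assumes that the size of each sample stays the same, so the number of samples per day is a reasonable proxy for ingest from that sample type. The function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def samples_per_day(interval_seconds):
    """Number of samples one source emits per day at a given interval."""
    return SECONDS_PER_DAY // interval_seconds


def reduction_pct(old_interval, new_interval):
    """Percentage drop in sample count when the interval is lengthened."""
    old = samples_per_day(old_interval)
    new = samples_per_day(new_interval)
    return 100 * (old - new) / old


print(samples_per_day(20))     # process samples at a 20s interval
print(samples_per_day(60))     # process samples at a 60s interval
print(reduction_pct(20, 60))   # relative effect of moving from 20s to 60s
```

Moving a sample type from 20s to 60s removes roughly two thirds of those samples, which is why the sampling rate is usually the first setting to review.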

Process samples

Process samples can be the single highest-volume source of data from the infrastructure agent, because the agent sends information about every running process on a host. They are disabled by default; however, they can be enabled as follows:

    enable_process_metrics: true

Setting enable_process_metrics to false has the same effect as setting metrics_process_sample_rate to -1.

By default, processes using low memory are excluded from being sampled. For more information, see disable-zero-mem-process-filter.

You can control how much data is sent to New Relic by configuring include_matching_metrics, which allows you to restrict the transmission of metric data based on the values of metric attributes.

You include metric data by defining literal or partial values for any of the attributes of the metric. For example, you can choose to send the host.process.cpuPercent of all processes whose process.name matches the ^java regular expression.

In this example, we include process metrics using executable files and names:

    include_matching_metrics: # You can combine attributes from different metrics
      process.name:
        - regex "^java" # Include all processes starting with "java"
      process.executable:
        - "/usr/bin/python2" # Include the Python 2.x executable
        - regex "\\System32\\svchost" # Include all svchost executables

You can also use this filter for the Kubernetes integration:

    env:
      - name: NRIA_INCLUDE_MATCHING_METRICS
        value: |
          process.name:
            - regex "^java"
          process.executable:
            - "/usr/bin/python2"
            - regex "\\System32\\svchost"

Network interface filter

Growth drivers

- Number of network interfaces monitored

The configuration uses a simple pattern-matching mechanism that can look for interfaces that start with a specific sequence of letters or numbers following either pattern:

- `{name}[other characters]`, where you specify the name using the `prefix` option

- `[number]{name}[other characters]`, where you specify the name using the `index-1` option

For example:

    network_interface_filters:
      prefix:
        - dummy
        - lo
      index-1:
        - tun

Default network interface filters for Linux:

- Network interfaces that start with `dummy`, `lo`, `vmnet`, `sit`, `tun`, `tap`, or `veth`

- Network interfaces that contain `tun` or `tap`

Default network interface filters for Windows:

- Network interfaces that start with `Loop`, `isatap`, or `Local`

To override the defaults, include your own filter in the config file:

    network_interface_filters:
      prefix:
        - dummy
        - lo
      index-1:
        - tun

Custom attributes

Custom attributes are key-value pairs (similar to tags in other tools) used to annotate the data from the Infrastructure agent. You can use this metadata to build filter sets, group your results, and annotate your data. For example, you might indicate a machine's environment (staging or production), the service a machine hosts (login service, for example), or the team responsible for that machine.

Example of custom attributes from newrelic.yml

    custom_attributes:
      environment: production
      service: billing
      team: alpha-team

They are powerful and useful, but if the data is not well organized or has become obsolete in any way, you should consider streamlining these.

Kubernetes integration

Growth drivers

- Number of `pods` and `containers` monitored

- Frequency and number of kube state metrics collected

- Logs generated per cluster

It's not surprising that a complex and decentralized system like Kubernetes has the potential to generate a lot of telemetry fast. There are a few good approaches to managing data ingest in Kubernetes. These will be very straightforward if you are using observability as code in your K8s deployments. We highly recommend you install this Kubernetes Data Ingest Analysis dashboard before making any decisions about reducing K8s ingest. The dashboard is available in this quickstart.

Scrape interval

Depending on your observability objectives you may consider adjusting the scrape interval. The default is 15s. Note that the Kubernetes cluster explorer only refreshes every 45s. If your primary use of the K8s data is to support the KCE visualizations, you may consider changing your scrape interval to 20s. Moving from 15s to 20s cuts the number of scrapes by a quarter, which can have a substantial impact. See more details about managing scrape interval in our Helm configuration documentation.

Kube state metrics

The Kubernetes cluster explorer requires only the following kube state metrics (KSM):

- Container data

- Cluster data

- Node data

- Pod data

- Volume data

- API server data*

- Controller manager data*

- ETCD data*

- Scheduler data*

*Not collected in a managed Kubernetes environment (EKS, GKE, AKS, etc.)

You may consider disabling some of the following:

- DaemonSet data

- Deployment data

- Endpoint data

- Namespace data

- ReplicaSet data**

- Service data

- StatefulSet data

**Used in the default alert: "ReplicaSet doesn't have desired amount of pods"

Example of updating state metrics in manifest (Deployment)

    [spec]
      [template]
        [spec]
          [containers]
            [name=kube-state-metrics]
              [args]
                #- --collectors=daemonsets
                #- --collectors=deployments
                #- --collectors=endpoints
                #- --collectors=namespaces
                #- --collectors=replicasets
                #- --collectors=services
                #- --collectors=statefulsets

Example of updating state metrics in manifest (ClusterRole)

    [rules]
    # - apiGroups: ["extensions", "apps"]
    #   resources:
    #   - daemonsets
    #   verbs: ["list", "watch"]

    # - apiGroups: ["extensions", "apps"]
    #   resources:
    #   - deployments
    #   verbs: ["list", "watch"]

    # - apiGroups: [""]
    #   resources:
    #   - endpoints
    #   verbs: ["list", "watch"]

    # - apiGroups: [""]
    #   resources:
    #   - namespaces
    #   verbs: ["list", "watch"]

    # - apiGroups: ["extensions", "apps"]
    #   resources:
    #   - replicasets
    #   verbs: ["list", "watch"]

    # - apiGroups: [""]
    #   resources:
    #   - services
    #   verbs: ["list", "watch"]

    # - apiGroups: ["apps"]
    #   resources:
    #   - statefulsets
    #   verbs: ["list", "watch"]

Config lowDataMode in nri-bundle chart

Our helm charts support setting an option to reduce the amount of data ingested at the cost of dropping detailed information. To enable it, set global.lowDataMode to true in the nri-bundle chart.

lowDataMode affects three specific components of the nri-bundle chart:

- Increase infrastructure agent interval from `15` to `30` seconds.

- Prometheus OpenMetrics Integration will exclude a few metrics as indicated in the Helm doc below.

- Labels and annotations details will be dropped from logs.

You can find more details about this configuration in our Helm doc.

On-host integrations

New Relic's on-host integrations (OHI for short) represent a diverse set of integrations for third-party services such as PostgreSQL, MySQL, Kafka, and RabbitMQ. It's not possible to provide every optimization technique in the scope of this document, but we can provide these generally applicable techniques:

- Manage sampling rate

- Manage those parts of the config that can increase or decrease breadth of collection

- Manage those parts of the config that allow for custom queries

- Manage the infrastructure agent's custom attributes because they'll be applied to all on-host integration data.

We'll use a few examples to demonstrate.

PostgreSQL integration

Growth drivers

- Number of tables monitored

- Number of indices monitored

The PostgreSQL on-host integration configuration provides these adjustable settings that can help manage data volume:

- interval: Default is 15s

- COLLECTION_LIST: List of tables to monitor (use ALL to monitor all)

- COLLECT_DB_LOCK_METRICS: Collect `dblock` metrics

- PGBOUNCER: Collect `pgbouncer` metrics

- COLLECT_BLOAT_METRICS: Collect bloat metrics

- METRICS: Set to `true` to collect only metrics

- INVENTORY: Set to `true` to enable only inventory collection

- CUSTOM_METRICS_CONFIG: Config file containing custom collection queries
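Of these settings, COLLECTION_LIST is the one that most directly reflects your monitoring priorities. The sample configuration for this integration passes it as a JSON object nested by database, then schema, then table, with a list of index names per table. The sketch below builds such a value from a plain mapping. The helper name and the table and index names are hypothetical.

```python
import json


def build_collection_list(tables_by_schema, database):
    """Serialize {schema: {table: [indexes]}} into a compact JSON string."""
    return json.dumps({database: tables_by_schema}, separators=(",", ":"))


# Hypothetical priority tables; an empty list means no indexes are named.
priority_tables = {"public": {"orders": ["orders_pkey"], "customers": []}}
collection_list = build_collection_list(priority_tables, database="postgres")
print(collection_list)
```

Generating the value from a reviewed list of priority tables keeps the configuration aligned with your value drivers as the schema grows.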

Sample config

    integrations:
      - name: nri-postgresql
        env:
          USERNAME: postgres
          PASSWORD: pass
          HOSTNAME: psql-sample.localnet
          PORT: 6432
          DATABASE: postgres
          COLLECT_DB_LOCK_METRICS: false
          COLLECTION_LIST: '{"postgres":{"public":{"pg_table1":["pg_index1","pg_index2"],"pg_table2":[]}}}'
          TIMEOUT: 10
        interval: 15s
        labels:
          env: production
          role: postgresql
        inventory_source: config/postgresql

Kafka integration

Growth drivers

- Number of brokers in cluster

- Number of topics in cluster

The Kafka on-host integration configuration provides these adjustable settings that can help manage data volume:

- interval: Default is 15s

- TOPIC_MODE: Determines how many topics we collect. Options are `all`, `none`, `list`, or `regex`.

- METRICS: Set to `true` to collect only metrics

- INVENTORY: Set to `true` to enable only inventory collection

- TOPIC_LIST: JSON array of topic names to monitor. Only in effect if TOPIC_MODE is set to `list`.

- COLLECT_TOPIC_SIZE: Collect the metric Topic size. Options are `true` or `false`, defaults to `false`.

- COLLECT_TOPIC_OFFSET: Collect the metric Topic offset. Options are `true` or `false`, defaults to `false`.

Note that topic-level metrics, especially offsets, can be resource intensive to collect and can have an impact on data volume. A cluster's ingest can increase by an order of magnitude simply through the addition of new Kafka topics to the cluster.

MongoDB Integration

Growth drivers

- Number of databases monitored

The MongoDB integration provides these adjustable settings that can help manage data volume:

- interval: Default is 15s

- METRICS: Set to `true` to collect only metrics

- INVENTORY: Set to `true` to enable only inventory collection

- FILTERS: A JSON map of database names to an array of collection names. If empty, it defaults to all databases and collections.

For any on-host integration you use, it's important to be aware of parameters like `FILTERS` where the default is to collect metrics from all databases. This is an area where you can use your monitoring priorities to streamline collected data.

Example configuration with different intervals for METRIC and INVENTORY

    integrations:
      - name: nri-mongodb
        env:
          METRICS: true
          CLUSTER_NAME: my_cluster
          HOST: localhost
          PORT: 27017
          USERNAME: mongodb_user
          PASSWORD: mongodb_password
        interval: 15s
        labels:
          environment: production
      - name: nri-mongodb
        env:
          INVENTORY: true
          CLUSTER_NAME: my_cluster
          HOST: localhost
          PORT: 27017
          USERNAME: mongodb_user
          PASSWORD: mongodb_password
        interval: 60s
        labels:
          environment: production
        inventory_source: config/mongodb

Elasticsearch integration

Growth drivers

- Number of nodes in cluster

- Number of indices in cluster

The Elasticsearch integration provides these adjustable settings that can help manage data volume:

- interval: Default is 15s

- METRICS: Set to `true` to collect only metrics

- INVENTORY: Set to `true` to enable only inventory collection

- COLLECT_INDICES: Signals whether to collect indices metrics or not.

- COLLECT_PRIMARIES: Signals whether to collect primary metrics or not.

- INDICES_REGEX: Filter which indices are collected.

- MASTER_ONLY: Collect cluster metrics on the elected master only.

Example configuration with different intervals for METRIC and INVENTORY

    integrations:
      - name: nri-elasticsearch
        env:
          METRICS: true
          HOSTNAME: localhost
          PORT: 9200
          USERNAME: elasticsearch_user
          PASSWORD: elasticsearch_password
          REMOTE_MONITORING: true
        interval: 15s
        labels:
          environment: production
      - name: nri-elasticsearch
        env:
          INVENTORY: true
          HOSTNAME: localhost
          PORT: 9200
          USERNAME: elasticsearch_user
          PASSWORD: elasticsearch_password
          CONFIG_PATH: /etc/elasticsearch/elasticsearch.yml
        interval: 60s
        labels:
          environment: production
        inventory_source: config/elasticsearch

JMX integration

Growth drivers

- Metrics listed in COLLECTION_CONFIG

The JMX integration is inherently generic. It allows us to scrape metrics from any JMX instance. We have a good amount of control over what gets collected by this integration. In some enterprise New Relic environments, JMX metrics represent a relatively high proportion of all data collected.

The JMX integration provides these adjustable settings that can help manage data volume:

- interval: Default is 15s

- METRICS: Set to `true` to collect only metrics

- INVENTORY: Set to `true` to enable only inventory collection

- METRIC_LIMIT: Number of metrics that can be collected per entity. If this limit is exceeded the entity will not be reported. A limit of 0 implies no limit.

- LOCAL_ENTITY: Collect all metrics on the local entity. Only used when monitoring localhost.

- COLLECTION_FILES: A comma-separated list of full file paths to the metric collection definition files. For on-host install, the default JVM metrics collection file is at /etc/newrelic-infra/integrations.d/jvm-metrics.yml.

- COLLECTION_CONFIG: Configuration of the metrics collection as a JSON.

It is the COLLECTION_CONFIG entries that most govern the amount of data ingested. Understanding the JMX model you are scraping will help you optimize.
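One way to see where a COLLECTION_CONFIG spends its budget is to count the attributes requested per bean query. The sketch below does that for a JSON string in the same shape as the integration's collection config. The function name and the abbreviated config are hypothetical, and a wildcard query such as `name=*` will multiply its count by the number of matching MBeans at runtime.

```python
import json


def attribute_counts(collection_config):
    """Map each bean query to the number of attributes it requests."""
    config = json.loads(collection_config)
    counts = {}
    for domain in config["collect"]:
        for bean in domain["beans"]:
            key = f'{domain["domain"]}:{bean["query"]}'
            counts[key] = len(bean.get("attributes", []))
    return counts


# Abbreviated, hypothetical config in the COLLECTION_CONFIG JSON shape.
example = json.dumps({"collect": [{
    "domain": "java.lang",
    "event_type": "JVMSample",
    "beans": [
        {"query": "type=Memory",
         "attributes": ["HeapMemoryUsage.Used", "HeapMemoryUsage.Max"]},
        {"query": "type=Threading", "attributes": ["ThreadCount"]},
    ],
}]})
counts = attribute_counts(example)
print(counts, sum(counts.values()))
```

Running this against your real configuration gives a quick inventory to review against your value drivers before removing entries.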

COLLECTION_CONFIG example for JVM metrics

    COLLECTION_CONFIG='{"collect":[{"domain":"java.lang","event_type":"JVMSample","beans":[{"query":"type=GarbageCollector,name=*","attributes":["CollectionCount","CollectionTime"]},{"query":"type=Memory","attributes":["HeapMemoryUsage.Committed","HeapMemoryUsage.Init","HeapMemoryUsage.Max","HeapMemoryUsage.Used","NonHeapMemoryUsage.Committed","NonHeapMemoryUsage.Init","NonHeapMemoryUsage.Max","NonHeapMemoryUsage.Used"]},{"query":"type=Threading","attributes":["ThreadCount","TotalStartedThreadCount"]},{"query":"type=ClassLoading","attributes":["LoadedClassCount"]},{"query":"type=Compilation","attributes":["TotalCompilationTime"]}]}]}'

Omitting any one entry from that config, such as `NonHeapMemoryUsage.Init`, will have a tangible impact on the overall data volume collected.

COLLECTION_CONFIG example for Tomcat metrics

    COLLECTION_CONFIG={"collect":[{"domain":"Catalina","event_type":"TomcatSample","beans":[{"query":"type=UtilityExecutor","attributes":["completedTaskCount"]}]}]}

Other on-host integrations

There are many other on-host integrations with configuration options that will help you optimize collection. The on-host integrations documentation is a good starting point to learn more.

Network performance monitoring

Growth drivers

- Monitored devices driven by:

  - hard configured devices

  - CIDR scope in discovery section

  - traps configured

This section focuses on New Relic's network performance monitoring, which relies on the ktranslate agent from Kentik. This agent is quite sophisticated, and it is important to fully understand the advanced configuration docs before major optimization efforts.

mibs_enabled: Array of all active MIBs the ktranslate docker image will poll. This list is automatically generated during discovery if the discovery_add_mibs attribute is true. MIBs not listed here will not be polled on any device in the configuration file. You can specify a SNMP table directly in a MIB file using MIB-NAME.tableName syntax. Ex: HOST-RESOURCES-MIB.hrProcessorTable.

user_tags: key:value pair attributes to give more context to the device. Tags at this level will be applied to all devices in the configuration file.

devices: Section listing devices to be monitored for flow

traps: configures IP and ports to be monitored with SNMP traps (default is 127.0.0.1:1162)

discovery: configures how endpoints can be discovered. Under this section the following parameters will do the most to increase or decrease scope:

- cidrs: Array of target IP ranges in CIDR notation.

- ports: Array of target ports to scan during SNMP polling.

- debug: Indicates whether to enable debug level logging during discovery. By default, it's set to false.

- default_communities: Array of SNMPv1/v2c community strings to scan during SNMP polling. This array is evaluated in order and discovery accepts the first passing community.
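Because the cidrs array sets how many addresses discovery may probe, it helps to size a range before adding it. The following is a rough sketch using only Python's standard ipaddress module; the function name discovery_scope is an assumption for illustration, it assumes all ranges are IPv4, and it counts addresses in the ranges rather than devices that will actually respond.

```python
import ipaddress


def discovery_scope(cidrs):
    """Count the addresses covered by a list of IPv4 CIDR ranges."""
    networks = [ipaddress.ip_network(cidr, strict=False) for cidr in cidrs]
    # collapse_addresses merges overlapping or adjacent ranges so nothing is counted twice.
    return sum(network.num_addresses for network in ipaddress.collapse_addresses(networks))


print(discovery_scope(["10.0.0.0/16"]))
print(discovery_scope(["10.0.0.0/24", "10.0.1.0/24"]))
```

Narrowing a /16 to the handful of /24 ranges that actually contain network devices reduces the candidate address space by orders of magnitude.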

To support filtering of data that does not create value for your observability needs, you can set the global.match_attributes.{} and/or devices.<deviceName>.match_attributes.{} attribute map. This provides filtering at the ktranslate level, before shipping data to New Relic, giving you granular control over monitoring of things like interfaces.

View this page for more details.

Growth drivers

- Logs forwarded

- Average size of forwarded log records

Logs represent one of the most flexible sources of telemetry in that we are typically routing logs through a dedicated forwarding layer with its own routing and transform rules. Since there are a variety of forwarders we'll focus on the most commonly used ones:

Fluentd

Fluentbit

New Relic infrastructure agent (built-in Fluentbit)

Logstash

Forwarders generally provide a fairly complete routing workflow that includes filtering and transformation.

New Relic's infrastructure agent provides some very simple patterns for filtering unwanted logs.

pattern: Regular expression for filtering records. Only supported for the tail, systemd, syslog, and tcp (only with format none) sources.

This field works in a way similar to grep -E in Unix systems. For example, for a given file being captured, you can filter for records containing either WARN or ERROR using:

    - name: only-records-with-warn-and-error
      file: /var/log/logFile.log
      pattern: WARN|ERROR

If you have pre-written Fluent Bit configurations that do valuable filtering or parsing, you can import them into your New Relic logging config by means of the config_file and parsers_file parameters.

config_file: path to an existing Fluent Bit configuration file. Note that any overlapping source results in duplicate messages in New Relic Logs.

parsers_file: path to an existing Fluent Bit parsers file. The following parser names are reserved: rfc3164, rfc3164-local and rfc5424.
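To illustrate the grep -E style matching of the pattern field described above, here is a small sketch using Python's re module. It only mimics the behavior on sample lines; the function name and the sample records are assumptions, and Python's regex dialect is not guaranteed to match the forwarder's engine beyond simple alternations like this one.

```python
import re


def filter_records(lines, pattern):
    """Keep only the lines where the pattern matches anywhere, like grep -E."""
    regex = re.compile(pattern)
    return [line for line in lines if regex.search(line)]


sample = [
    "2024-01-01 INFO service started",
    "2024-01-01 WARN disk usage at 91%",
    "2024-01-01 ERROR connection refused",
    "2024-01-01 DEBUG cache miss",
]
kept = filter_records(sample, "WARN|ERROR")
for line in kept:
    print(line)
```

Trying a pattern against a sample of real log lines before deploying it is a cheap way to confirm that it keeps what you expect and nothing more.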

Learning how to inject attributes (or tags) into your logs in your data pipeline and to perform transformations can help with downstream dropping using New Relic drop rules. By augmenting your logs with metadata about the source, you can make centralized and easily reversible decisions about what to drop on the backend. Some fairly obvious attributes that should be present on your logs in one form or another are:

Team

Environment (dev/stage/prod)

Application

Data center

Log level
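As a rough illustration of the enrichment idea above, the sketch below adds source metadata to a log record before it is forwarded. The attribute names (team, environment, application, datacenter) and the helper enrich_log are hypothetical; in practice this step usually lives in the forwarder's own configuration rather than in application code.

```python
def enrich_log(record, source_metadata):
    """Return a copy of the record with source metadata added.

    Keys already present on the record win, so enrichment never
    overwrites what the application set itself.
    """
    return {**source_metadata, **record}


# Hypothetical metadata describing where the log came from.
source_metadata = {
    "team": "payments",
    "environment": "dev",
    "application": "checkout-api",
    "datacenter": "us-east-1",
}
record = {"message": "cache miss for key 42", "level": "DEBUG"}
enriched = enrich_log(record, source_metadata)
print(enriched)
```

Once every record carries attributes like these consistently, a single backend query on environment and level can target a whole class of logs.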

Below are some detailed routing and filtering resources:

Growth drivers

- Number of metrics exported from apps

- Number of metrics transferred via remote write or POMI

New Relic provides two primary options for sending Prometheus metrics to NRDB. The best practices for managing metric ingest in this document will be focused primarily on Option 2, the Prometheus OpenMetrics Integration (POMI), as this component was created by New Relic.

Option 1: Prometheus Remote Write

Prometheus server scrape config options are fully documented here. These scrape configs determine which metrics are collected by Prometheus server. By configuring the remote_write parameter, the collected metrics can be written to NRDB via the New Relic Metric API.

Option 2: Prometheus OpenMetrics Integration (POMI)

POMI is a standalone integration that scrapes metrics from both dynamically discovered and static Prometheus endpoints. POMI then sends this data to NRDB via the New Relic Metric API. This integration is ideal for customers not currently running Prometheus Server.

POMI: scrape label

POMI will discover any Prometheus endpoint containing the label or annotation “prometheus.io/scrape=true” by default. Depending upon what is deployed in the cluster, this can be a large number of endpoints and thus, a large number of metrics ingested.

It is suggested that the scrape_enabled_label parameter be modified to something custom (e.g. “newrelic/scrape”) and that the Prometheus endpoints be selectively labeled or annotated when data ingest is of utmost concern.

See nri-prometheus-latest.yaml for the latest reference config.
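The effect of switching to a custom scrape label can be sketched with a small model. This is not POMI's implementation: the endpoint dictionaries and the function select_scrape_targets are assumptions that only mirror the selection rule described above (a label or annotation with the configured key set to true).

```python
def select_scrape_targets(endpoints, scrape_enabled_label="prometheus.io/scrape"):
    """Return the names of endpoints whose labels or annotations opt in to scraping."""
    selected = []
    for endpoint in endpoints:
        metadata = {**endpoint.get("annotations", {}), **endpoint.get("labels", {})}
        if metadata.get(scrape_enabled_label) == "true":
            selected.append(endpoint["name"])
    return selected


# Hypothetical endpoints; only "checkout" carries the custom label.
endpoints = [
    {"name": "checkout", "labels": {"newrelic/scrape": "true", "prometheus.io/scrape": "true"}},
    {"name": "batch-job", "annotations": {"prometheus.io/scrape": "true"}},
    {"name": "internal-tool", "labels": {}},
]

default_targets = select_scrape_targets(endpoints)
custom_targets = select_scrape_targets(endpoints, "newrelic/scrape")
print(default_targets, custom_targets)
```

With the default label, every endpoint that already opts in for Prometheus is scraped; with a custom label, only the endpoints you deliberately tag contribute to ingest.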

POMI config parameter

    # Label used to identify scrapable targets.
    # Defaults to "prometheus.io/scrape"
    scrape_enabled_label: "prometheus.io/scrape"

POMI: scrape label for nodes

POMI will discover any Prometheus endpoint exposed at the node-level by default. This typically includes metrics coming from Kubelet and cAdvisor.

If you are running the New Relic Kubernetes Daemonset, it is important that you set require_scrape_enabled_label_for_nodes: true so that POMI does not collect duplicate metrics.

The endpoints targeted by the New Relic Kubernetes Daemonset are outlined here.

POMI config parameters

    # Whether k8s nodes need to be labeled to be scraped or not.
    # Defaults to false.
    require_scrape_enabled_label_for_nodes: false

POMI: Co-existing with nri-kubernetes

New Relic's Kubernetes integration collects a number of metrics out of the box. However, it does not collect every possible metric available from a Kubernetes cluster.

In the POMI config, you'll see a section similar to this which will disable metric collection for a subset of metrics that the New Relic Kubernetes integration is already collecting from Kube State Metrics.

It's also very important to set require_scrape_enabled_label_for_nodes: true so that Kubelet and cAdvisor metrics are not duplicated.

POMI config parameters

    transformations:
      - description: "Uncomment if running New Relic Kubernetes Integration"
        ignore_metrics:
          - prefixes:
            - kube_daemonset_
            - kube_deployment_
            - kube_endpoint_
            - kube_namespace_
            - kube_node_
            - kube_persistentvolume_
            - kube_persistentvolumeclaim_
            - kube_pod_
            - kube_replicaset_
            - kube_service_
            - kube_statefulset_

POMI: request/limit settings

When running POMI, it's recommended to apply the following resource limits for clusters generating approximately 500k DPM:

- CPU limit: 1 core (1000m)

- Memory limit: 1 GB (1G)

The resource request for CPU and Memory should be set at something reasonable so that POMI receives enough resources from the cluster. Setting this to something extremely low (e.g. cpu: 50m) can result in cluster resources being consumed by “noisy neighbors”.

An example query for determining the DPM (post-ingest) can be found here.

POMI config parameter

…

    spec:
      serviceAccountName: nri-prometheus
      containers:
        - name: nri-prometheus
          image: newrelic/nri-prometheus:2.2.0
          resources:
            requests:
              memory: 512Mi
              cpu: 500m
            limits:
              memory: 1G
              cpu: 1000m

…

POMI: estimating DPM and cardinality

Although cardinality is not associated directly with billable per GB ingest, New Relic does maintain certain rate limits on cardinality and data points per minute. Being able to visualize cardinality and DPM from a Prometheus cluster can be very important.

Tip

Trial and paid accounts receive a 1M DPM and 1M cardinality limit for trial purposes, but you can request up to 15M DPM and 15M cardinality for your account. To request changes to your metric rate limits, contact your New Relic account representative. View this doc for more information.

If you’re already running Prometheus Server, you can run DPM and cardinality estimates there prior to enabling POMI or remote_write.

Data points per minute (DPM)

rate(prometheus_tsdb_head_samples_appended_total[10m]) * 60

Top 20 metrics (highest cardinality)

topk(20, count by (__name__, job)({__name__=~".+"}))
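If you don't have a Prometheus server to query yet, a back-of-the-envelope estimate is still possible, since each active series yields one data point per scrape. The sketch below is an approximation under that assumption with a single uniform scrape interval; the function names and the sample numbers are illustrative, and the 1M default comes from the tip above.

```python
DEFAULT_DPM_LIMIT = 1_000_000  # default limit mentioned in the tip above


def estimate_dpm(active_series, scrape_interval_seconds):
    """Estimate data points per minute: one point per series per scrape."""
    return active_series * 60 / scrape_interval_seconds


def within_limit(dpm, limit=DEFAULT_DPM_LIMIT):
    """Check an estimate against a DPM limit."""
    return dpm <= limit


# Hypothetical cluster: 250,000 active series scraped every 30 seconds.
dpm = estimate_dpm(active_series=250_000, scrape_interval_seconds=30)
print(dpm, within_limit(dpm))
```

The same arithmetic shows the two levers available: halving the number of series or doubling the scrape interval each cut DPM in half.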

Growth drivers

- Number of metrics exported per integration

- Polling frequency (for polling based integration)

New Relic cloud integrations get data from cloud providers' APIs. Data is generally collected from monitoring APIs such as AWS CloudWatch, Azure Monitor, and GCP Stackdriver, and inventory metadata is collected from the specific services' APIs. In addition, some integrations get their data from streaming metrics that are pushed via a streaming service such as AWS Kinesis.

Polling API-based integrations

If you want to report more or less data from your cloud integrations, or if you need to control the use of the cloud providers' APIs to prevent reaching rate and throttling limits in your cloud account, you can change the configuration settings to modify the amount of data they report. The two main controls are:

Examples of business reasons for wanting to change your polling frequency include:

Billing: If you need to manage your AWS CloudWatch bill, you may want to decrease the polling frequency. Before you do this, make sure that any alert conditions set for your cloud integrations are not affected by this reduction.

New services: If you are deploying a new service or configuration and you want to collect data more often, you may want to increase the polling frequency temporarily.

Caution

Changing the configuration settings for your integrations may impact alert conditions and chart trends.

View this doc for more details.
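To get a feel for what a polling change means, the following sketch compares the number of polls per day at two intervals. It is simple arithmetic under the assumption that each poll issues a comparable number of API requests; the intervals are hypothetical, and actual provider billing depends on the metrics requested per poll.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def polls_per_day(polling_interval_seconds):
    """Number of polls issued in one day at a fixed interval."""
    return SECONDS_PER_DAY / polling_interval_seconds


def relative_reduction(current_interval_seconds, new_interval_seconds):
    """Fraction of polls saved by moving from the current to the new interval."""
    before = polls_per_day(current_interval_seconds)
    after = polls_per_day(new_interval_seconds)
    return (before - after) / before


# Hypothetical change: polling every 15 minutes instead of every 5 minutes.
reduction = relative_reduction(300, 900)
print(f"{reduction:.1%} fewer polls per day")
```

Remember the caution above: a longer interval also means fewer data points behind any alert condition evaluated on that integration's data.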

Streaming or Pushed Metrics

More and more cloud integrations are offering the option of having the data pushed via a streaming service. This has proven to cut down on latency drastically. One issue some users have observed is that it's not as easy to control volume since sampling rate cannot be configured.

Drop rules will be well covered in the next section. They are the primary way of filtering out streaming metrics that are too high volume. However, there are some things that can be done on the cloud provider side to limit the stream volume somewhat.

For example, in AWS it's possible to use condition keys to limit access to CloudWatch namespaces.

The following policy limits the user to publishing metrics only in the namespace named MyCustomNamespace.

    {
      "Version": "2012-10-17",
      "Statement": {
        "Effect": "Allow",
        "Resource": "*",
        "Action": "cloudwatch:PutMetricData",
        "Condition": {
          "StringEquals": {
            "cloudwatch:namespace": "MyCustomNamespace"
          }
        }
      }
    }

The following policy allows the user to publish metrics in any namespace except for CustomNamespace2.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Resource": "*",
          "Action": "cloudwatch:PutMetricData"
        },
        {
          "Effect": "Deny",
          "Resource": "*",
          "Action": "cloudwatch:PutMetricData",
          "Condition": {
            "StringEquals": {
              "cloudwatch:namespace": "CustomNamespace2"
            }
          }
        }
      ]
    }
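The difference between the two policies above comes down to how allow and deny statements combine. The sketch below is a deliberately simplified model, not AWS's evaluation engine: it handles only exact action names and the StringEquals condition on cloudwatch:namespace, and it ignores Resource, wildcards, and every other policy feature. It applies the rule that an explicit deny overrides any allow, and that nothing is allowed by default.

```python
ALLOW_ONLY = {
    "Version": "2012-10-17",
    "Statement": {
        "Effect": "Allow",
        "Resource": "*",
        "Action": "cloudwatch:PutMetricData",
        "Condition": {"StringEquals": {"cloudwatch:namespace": "MyCustomNamespace"}},
    },
}

ALLOW_EXCEPT = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Resource": "*", "Action": "cloudwatch:PutMetricData"},
        {
            "Effect": "Deny",
            "Resource": "*",
            "Action": "cloudwatch:PutMetricData",
            "Condition": {"StringEquals": {"cloudwatch:namespace": "CustomNamespace2"}},
        },
    ],
}


def statement_matches(statement, action, namespace):
    """True if the statement applies to this action and namespace (simplified)."""
    actions = statement["Action"]
    if isinstance(actions, str):
        actions = [actions]
    if action not in actions:
        return False
    expected = statement.get("Condition", {}).get("StringEquals", {}).get("cloudwatch:namespace")
    return expected is None or expected == namespace


def is_allowed(policy, action, namespace):
    """Explicit deny wins; otherwise at least one matching allow is required."""
    statements = policy["Statement"]
    if isinstance(statements, dict):
        statements = [statements]
    matching = [s for s in statements if statement_matches(s, action, namespace)]
    if any(s["Effect"] == "Deny" for s in matching):
        return False
    return any(s["Effect"] == "Allow" for s in matching)


print(is_allowed(ALLOW_ONLY, "cloudwatch:PutMetricData", "MyCustomNamespace"))
print(is_allowed(ALLOW_EXCEPT, "cloudwatch:PutMetricData", "CustomNamespace2"))
```

The first policy is an allow list (only the named namespace can publish), while the second is a deny list, which is usually the easier form when only a few noisy namespaces need to be excluded.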

If you can query it you can drop it

Drop filter rules help you accomplish several important goals:

Lower costs by storing only the logs relevant to your account.

Protect privacy and security by removing personally identifiable information (PII).

Reduce noise by removing irrelevant events and attributes.

A note of caution

When creating drop rules, you are responsible for ensuring that the rules accurately identify and discard the data that meets the conditions that you have established. You are also responsible for monitoring the rule, as well as the data you disclose to New Relic. Always test and retest your queries, and after the drop rule is installed, make sure it works as intended. Creating a dashboard to monitor your data before and after the drop will help.

All New Relic drop rules are implemented by the same backend data model and API. However, New Relic log management provides a powerful UI that makes it very easy to create and monitor drop rules.

In our previous section on prioritizing telemetry we ran through some exercises to show ways in which we could deprecate certain data. Let's revisit this example:

Omit debug logs (knowing they can be turned on if there is an issue) (saves 5%)

Method 1: Log UI

- Identify the logs we care about using a filter in the Log UI: level: DEBUG

- Make sure it finds the logs we want to drop

- Check some alternative syntax such as level:debug and log_level:Debug. These variations are common.

- Under Manage Data click Drop filters and create and enable a filter named 'Drop Debug Logs'

- Verify the rule works

Method 2: Our NerdGraph API

- Create the relevant NRQL query: SELECT count(*) FROM Log WHERE `level` = 'DEBUG'

- Make sure it finds the logs we want to drop

- Check variations on the attribute name and value (Debug vs DEBUG)

- Execute the following NerdGraph statement and make sure it works:

    mutation {
      nrqlDropRulesCreate(accountId: YOUR_ACCOUNT_ID, rules: [
        {
          action: DROP_DATA
          nrql: "SELECT * FROM Log WHERE `level` = 'DEBUG'"
          description: "Drops DEBUG logs. Disable if needed for troubleshooting."
        }
      ]) {
        successes { id }
        failures {
          submitted { nrql }
          error { reason description }
        }
      }
    }

Let's implement the recommendation:

Drop process sample data in DEV environments.

- Create the relevant query: SELECT * FROM ProcessSample WHERE `env` = 'DEV'

- Make sure it finds the process samples we want to drop

- Check for other variations on env such as ENV and Environment

- Check for variations of DEV such as Dev and Development

- Use our NerdGraph API to execute the following statement and make sure it works:

    mutation {
      nrqlDropRulesCreate(accountId: YOUR_ACCOUNT_ID, rules: [
        {
          action: DROP_DATA
          nrql: "SELECT * FROM ProcessSample WHERE `env` = 'DEV'"
          description: "Drops ProcessSample from development environments"
        }
      ]) {
        successes { id }
        failures {
          submitted { nrql }
          error { reason description }
        }
      }
    }
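Since the mutations in this section differ only in the action, query, and description, they are easy to generate. The following is a minimal sketch that only builds the mutation text in the shape shown above; the function name build_drop_rule_mutation and the account ID are assumptions, and sending the request to NerdGraph is intentionally left out. It relies on json.dumps to produce a double-quoted, escaped string literal, which should be safe for typical NRQL.

```python
import json


def build_drop_rule_mutation(account_id, nrql, description, action="DROP_DATA"):
    """Return NerdGraph mutation text for a single drop rule (hypothetical helper)."""
    # Only the two actions used in this document are accepted here.
    if action not in ("DROP_DATA", "DROP_ATTRIBUTES"):
        raise ValueError(f"unsupported action: {action}")
    return (
        "mutation {\n"
        f"  nrqlDropRulesCreate(accountId: {int(account_id)}, rules: [{{\n"
        f"    action: {action}\n"
        f"    nrql: {json.dumps(nrql)}\n"
        f"    description: {json.dumps(description)}\n"
        "  }]) {\n"
        "    successes { id }\n"
        "    failures { submitted { nrql } error { reason description } }\n"
        "  }\n"
        "}"
    )


mutation = build_drop_rule_mutation(
    account_id=1234567,  # placeholder account ID
    nrql="SELECT * FROM ProcessSample WHERE `env` = 'DEV'",
    description="Drops ProcessSample from development environments",
)
print(mutation)
```

Generating rules from a reviewed list of queries keeps descriptions consistent and makes it easier to track which rules exist and why.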

In some cases we can economize on data where we have redundant coverage. For example: in an environment where you have the AWS RDS integration running as well as the New Relic on-host integration you may be able to discard some overlapping metrics.

For quick exploration we can run a query like this:

FROM Metric select count(*) where metricName like 'aws.rds%' facet metricName limit max

That will show us all metricNames matching the pattern.

We see from the results there is a high volume of metrics of the pattern aws.rds.cpu%. Let's drop those since we have other instrumentation for those.

- Create the relevant query: FROM Metric select * where metricName like 'aws.rds.cpu%' facet metricName limit max since 1 day ago

- Make sure it finds the metrics we want to drop

- Use the NerdGraph API to execute the following statement and make sure it works:

    mutation {
      nrqlDropRulesCreate(accountId: YOUR_ACCOUNT_ID, rules: [
        {
          action: DROP_DATA
          nrql: "FROM Metric select * where metricName like 'aws.rds.cpu%' facet metricName limit max since 1 day ago"
          description: "Drops rds cpu related metrics"
        }
      ]) {
        successes { id }
        failures {
          submitted { nrql }
          error { reason description }
        }
      }
    }

One powerful thing about drop rules is that we can configure a rule that drops specific attributes but keeps the rest of the data intact. Use this to remove private data from NRDB, or to drop excessively large attributes. For example, stack traces or large chunks of JSON in log records can sometimes be very large.

To set these drop rules, change the action field to DROP_ATTRIBUTES instead of DROP_DATA.

    mutation {
      nrqlDropRulesCreate(accountId: YOUR_ACCOUNT_ID, rules: [
        {
          action: DROP_ATTRIBUTES
          nrql: "SELECT stack_trace, json_data FROM Log where appName='myApp'"
          description: "Drops large fields from logs for myApp"
        }
      ]) {
        successes { id }
        failures {
          submitted { nrql }
          error { reason description }
        }
      }
    }

Caution

Use random sampling of events carefully, and only in situations where there are no other alternatives, since it can alter statistical inferences made from your data. However, for events with a massive sample size, you may be able to make do with only a portion of your data as long as you understand the consequences.

In this example we'll take advantage of the relative distribution of certain trace IDs to approximate random sampling. We can use the rlike operator to check for the leading values of a span's trace.id attribute.

The following example could drop about 25% of spans.

SELECT * FROM Span WHERE trace.id rlike r'^[0-3].*' and appName = 'myApp'

Useful expressions include:

- ^0.* approximates 6.25%

- ^[0-1].* approximates 12.5%

- ^[0-2].* approximates 18.75%

- ^[0-3].* approximates 25.0%
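The percentages above follow from the 16 possible leading hexadecimal characters: each one accounts for 1/16 (6.25%) of IDs. The sketch below checks this by enumerating every two-character hex prefix, assuming trace IDs are lowercase hexadecimal strings whose leading characters are uniformly distributed. The function name matching_fraction is an assumption for illustration.

```python
import re
from itertools import product

HEX_DIGITS = "0123456789abcdef"


def matching_fraction(pattern, prefix_length=2):
    """Fraction of all hex prefixes of the given length matched by the pattern."""
    regex = re.compile(pattern)
    prefixes = ["".join(chars) for chars in product(HEX_DIGITS, repeat=prefix_length)]
    matched = sum(1 for prefix in prefixes if regex.match(prefix))
    return matched / len(prefixes)


for pattern in (r"^0.*", r"^[0-1].*", r"^[0-2].*", r"^[0-3].*"):
    print(pattern, f"{matching_fraction(pattern):.2%}")
```

If your trace IDs use a different encoding or are not uniformly distributed, verify the actual share with a count query before relying on these numbers.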

See an example of a full mutation:

    mutation {
      nrqlDropRulesCreate(accountId: YOUR_ACCOUNT_ID, rules: [
        {
          action: DROP_DATA
          nrql: "SELECT * FROM Span WHERE trace.id rlike r'^[0-3].*' and appName = 'myApp'"
          description: "Drops approximately 25% of spans for myApp"
        }
      ]) {
        successes { id }
        failures {
          submitted { nrql }
          error { reason description }
        }
      }
    }

The preceding examples should show you all you need to know to use these techniques on any other event or metric in NRDB. If you can query it, you can drop it. Reach out to New Relic if you have questions about the precise way to structure a query for a drop rule.

Exercise

Answering the following questions will help you develop confidence in your ability to develop and execute optimization plans. You may want to use the Data Ingest Baseline and Data Ingest Entity Breakdown dashboards from the Baselining section. Install those dashboards as described and see how many of these questions you can answer.

| Questions |
|---|
| Show three drop rules with which you could reduce this organization's ingest by at least 5% per month. Include the NerdGraph syntax for your drop rule in your response. |
| Suggest three instrumentation configuration changes you could implement to reduce this organization's ingest by at least 5% per month. Include the configuration snippets in your response. |
| What are three things you could do to reduce data volume from K8s monitoring? How much data reduction could you achieve? What are potential trade-offs of this reduction (i.e., would they lose any substantial observability)? |
| 1. Use the Data Governance Baseline dashboard to identify an account that’s sending a large amount of log data to New Relic. 2. Find and select that account from the account dropdown menu. 3. Navigate to the logs page of the account and select patterns from the left-side menu. 4. Review the log patterns shown and give some examples of the low value log patterns. What makes them low value? How much total reduction could you achieve by dropping these logs? |
| Based on your overall analysis of this organization, what telemetry is underutilized? |

Conclusion

The process section showed us how to associate our telemetry with specific observability value drivers or objectives. This can make the hard decision of optimizing our account ingest somewhat easier. We learned how to describe a high-level optimization plan that optimizes ingest while protecting our objectives. Finally, we were introduced to a rich set of recipes for configuration and drop rule based ingest optimizations.